Digital utilities: And the data beat goes on

Modern, digital utilities have a challenge managing the data they create. Learn how Software AG can help by connecting utility digital operations.

Modern, digital utilities have a huge challenge managing the deluge of data that they create.

Utility data management is one of the least-discussed challenges facing utilities today. My first experience with this was when I worked at a West Coast utility during the implementation of a large smart meter deployment (several million endpoints).

While we had planned for storing the data that the system would generate at full deployment, we were still surprised when it happened. We were surprised in two ways: first, watching how a data center had to grow simply to meet storage requirements; second, hearing the immediate next question: “Once you have all that data, what do you do with it?”

Massive amounts of data

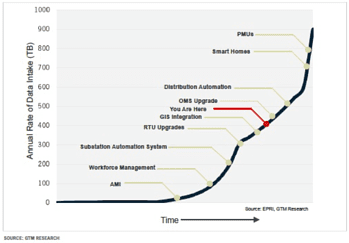

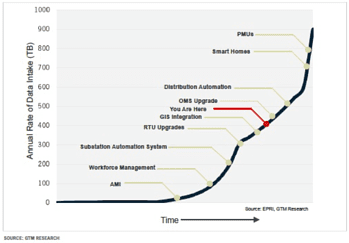

Why were we surprised? The massive amount of data being generated kept growing. One of the best research documents on this topic came from Greentech Media. As the chart shows, more and more utility operational processes are being digitalized.

As this transition accelerates over time, the annual rate of data creation and storage increases dramatically. Not only does the data beat go on – it is accelerating at a rate of hundreds of terabytes per year.

Managing the data tsunami

There are three critical questions that utilities must ask themselves before they can harness the value of their data:

- What is the right data?

- Is the data good quality?

- How does the data move?

The right data

A recent article published by T_HQ highlighted how technology can impact utility operations across the value chain. It identified four key impact areas where utilities are focused:

- Driving customer experience

- Mitigating risk and improving safety

- Increasing reliability and operational efficiency

- Building new revenue models

So which data is worth preserving to support one or more of the four goals outlined above? If the data is not clearly aligned to one of these goals, should it be preserved? If the data is aligned to one of the goals, is it of sufficient quality to be used?
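The questions above can be read as a simple triage rule. The following is only a minimal sketch of that idea, not a description of any particular product: the goal identifiers, the `Dataset` fields, the 0.0 to 1.0 quality score, and the 0.8 threshold are all hypothetical and would need to be replaced with whatever a utility actually uses to tag and score its data.

```python
from __future__ import annotations

from dataclasses import dataclass

# The four impact areas listed above; the identifiers are illustrative.
GOALS = {
    "customer_experience",
    "risk_and_safety",
    "reliability_and_efficiency",
    "new_revenue_models",
}


@dataclass
class Dataset:
    name: str
    goals: set[str]   # impact areas this data supports (hypothetical tags)
    quality: float    # assumed score between 0.0 and 1.0 from a quality check


def triage(ds: Dataset, min_quality: float = 0.8) -> str:
    """Apply the alignment and quality questions to a single dataset."""
    if not (ds.goals & GOALS):
        # Not aligned to any goal: question whether to keep it at all.
        return "review retention"
    if ds.quality < min_quality:
        # Aligned, but not yet good enough to be used.
        return "improve quality"
    return "preserve"


# Hypothetical catalog entries, for illustration only.
catalog = [
    Dataset("interval_meter_reads", {"customer_experience"}, 0.95),
    Dataset("transformer_sensor_feed", {"reliability_and_efficiency"}, 0.55),
    Dataset("legacy_debug_logs", set(), 0.90),
]

for ds in catalog:
    print(f"{ds.name}: {triage(ds)}")
```

In practice the decision would rarely be this binary, but even a rough rule like this makes it explicit why each dataset is being kept.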

Data quality

Data quality is largely driven by two interrelated dimensions: first, the systems and applications that support a workflow process; second, how the processes themselves are architected. More often than not, how the processes are executed determines which data is captured and in what format. If data quality is critical to supporting digital transformation initiatives, then utilities must implement a process documentation and optimization capability that inventories and optimizes the process workflows that affect that data quality.

Data movement

Finally, one of the least understood aspects of all digital transformation initiatives is that for data to be leveraged appropriately, it needs to be accessible and moved to the right resources to support optimal decisions. Whether the recipient is an AI/machine learning algorithm, a human in a support role (a reliability engineer, for example), or a third-party ecosystem provider, moving the data across internal and external systems in a timely manner requires a robust, highly capable integration and API management capability. And this should hold regardless of whether a system runs on premises or in a cloud application environment.

Let us show you how we can help you face the music as the data beat goes on, by truly connecting utility digital operations.